evolving networks to have intelligence realized at nanoscale

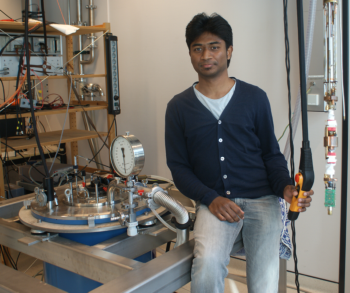

Celestine Lawrence is a PhD student in the research group Formal Methods and Tools. His supervisors are prof.dr.ir. W.G. van der Wiel and prof.dr.ir. H.J. Broersma from the faculty of Electrical Engineering, Mathematics and Computer Science (EEMCS).

The first large-scale electronic computer was built during the Second World War. Named ENIAC (Electronic Numerical Integrator and Computer), the machine was as big as a stack of 100 humans. It was originally designed to calculate firing tables for artillery, a task humans were bad at. Fortunately, the war ended, and subsequent generations of computers have enhanced many aspects of human activity and helped us achieve otherwise impossible tasks such as space exploration. Modern computers have shrunk to microprocessors smaller than a thumb and have thus become much faster and more energy efficient. This technological advancement has made computers capable of running artificial neural networks and delivering applications like Microsoft’s Seeing AI, which narrates the scenery captured on camera to enrich the lives of the blind. This kind of AI (artificial intelligence) is made possible by deep learning algorithms on artificial neural networks, which are mathematical models of computational networks similar to the brain. Computer chips that can efficiently implement artificial neural networks are being developed at a rapid pace, and deep learning machines have recently achieved superhuman skill in strategic board games and video games, bringing new knowledge and intuition to how these games are played. This is done by optimizing millions of synaptic weights in an artificial neural network through backpropagation: errors in the network's decisions are propagated backward from the output layer, through the hidden layers, and finally to the input layer, and the synaptic weights are adjusted layer by layer. However, we are still a moonshot away from achieving what is known as artificial general intelligence, which also includes the empathy and wisdom to choose between good and evil in the real world.
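To make the backward pass described above concrete, here is a minimal sketch of backpropagation on a tiny network with one hidden layer. Everything in it is an illustrative assumption rather than part of the thesis: the XOR task, the network size, the sigmoid activation, the learning rate and all function names are chosen only to keep the example short.

```python
import math
import random

# Toy task (an assumption for illustration): learn the XOR truth table.
XOR_DATA = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0),
            ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def forward(x, w_hidden, w_out):
    """Forward pass; the last weight in every row acts as a bias."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x + [1.0])))
              for row in w_hidden]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden + [1.0])))
    return hidden, output


def mean_squared_error(data, w_hidden, w_out):
    return sum((forward(x, w_hidden, w_out)[1] - t) ** 2
               for x, t in data) / len(data)


def train(data, n_hidden=3, epochs=5000, lr=0.5, seed=0):
    """Full-batch gradient descent with backpropagated error signals."""
    rng = random.Random(seed)
    n_in = len(data[0][0])
    w_hidden = [[rng.uniform(-1, 1) for _ in range(n_in + 1)]
                for _ in range(n_hidden)]
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden + 1)]
    loss_before = mean_squared_error(data, w_hidden, w_out)

    for _ in range(epochs):
        grad_out = [0.0] * (n_hidden + 1)
        grad_hidden = [[0.0] * (n_in + 1) for _ in range(n_hidden)]
        for x, target in data:
            hidden, output = forward(x, w_hidden, w_out)
            # Error signal at the output layer.
            delta_out = (output - target) * output * (1.0 - output)
            # Propagate the error signal backward to the hidden layer.
            delta_hidden = [delta_out * w_out[j] * hidden[j] * (1.0 - hidden[j])
                            for j in range(n_hidden)]
            for j, h in enumerate(hidden + [1.0]):
                grad_out[j] += delta_out * h / len(data)
            for j in range(n_hidden):
                for i, xi in enumerate(x + [1.0]):
                    grad_hidden[j][i] += delta_hidden[j] * xi / len(data)
        # Adjust the weights, output layer first, then the hidden layer.
        for j in range(n_hidden + 1):
            w_out[j] -= lr * grad_out[j]
        for j in range(n_hidden):
            for i in range(n_in + 1):
                w_hidden[j][i] -= lr * grad_hidden[j][i]

    loss_after = mean_squared_error(data, w_hidden, w_out)
    return loss_before, loss_after
```

Calling `train(XOR_DATA)` returns the mean squared error before and after training. Real deep learning systems apply the same idea to millions of weights with specialised libraries and hardware.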

The challenge is to make neuromorphic hardware that is as large-scale as the human brain, which consists of neurons with an average of synapses per neuron. In comparison, IBM’s TrueNorth, the largest neuromorphic chip of the past four years, has only neurons and synapses per neuron, implemented on a thumb-sized chip packing transistors based on 28 nm CMOS technology. Shrinking the feature sizes even further to achieve greater packing density leads to imperfections and other unintended physical effects, which puts such devices beyond the scope of human design. To resolve this, we recall the ways of nature!

Natural computing systems are a product of evolution. Evolution uses whatever physical processes are exploitable. So, instead of making electronic circuits that have design rules to exclude physical processes such as capacitive crosstalk, it seems more natural to use matter in its designless form and evolve it for computation. Here, we show that the electronic properties of nanomaterial clusters can be evolved to realize Boolean logic gates. The cluster is designless in the sense that it is a disordered assembly of particles. Clusters of nanoparticles are tested at 300 mK and it is discovered that our system meets the essential criteria for the physical realization of neural networks: universality, compactness, robustness and evolvability. The same is repeated for clusters of dopant atoms in silicon at 77 K.
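The idea of evolving a designless system toward a logic gate can be sketched in software. The example below is only a toy under stated assumptions: `make_toy_cluster` is a hypothetical stand-in (a fixed random nonlinear map) and not a physical model of the nanoparticle or dopant clusters, the "control" values are hypothetical tunable parameters, and the genetic algorithm settings are arbitrary. It shows the search principle, not the experimental procedure of the thesis.

```python
import math
import random

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
GATES = {"AND": [0, 0, 0, 1], "OR": [0, 1, 1, 1], "XOR": [0, 1, 1, 0]}


def make_toy_cluster(n_controls, seed):
    """Hypothetical surrogate: a fixed random nonlinear map, NOT device physics."""
    rng = random.Random(seed)
    n_nodes = 6
    w_in = [[rng.gauss(0, 1) for _ in range(2 + n_controls)]
            for _ in range(n_nodes)]
    w_out = [rng.gauss(0, 1) for _ in range(n_nodes)]

    def output_current(inputs, controls):
        signal = list(inputs) + list(controls)
        return sum(wo * math.tanh(sum(w * s for w, s in zip(row, signal)))
                   for wo, row in zip(w_out, w_in))

    return output_current


def fitness(cluster, controls, target):
    """Number of truth-table rows (0 to 4) reproduced after thresholding."""
    outputs = [cluster(inp, controls) for inp in INPUTS]
    threshold = (max(outputs) + min(outputs)) / 2.0
    bits = [1 if o > threshold else 0 for o in outputs]
    return sum(int(b == t) for b, t in zip(bits, target))


def evolve(target, n_controls=5, pop_size=20, generations=60, seed=1):
    """Simple genetic algorithm with elitism over the control values."""
    rng = random.Random(seed)
    cluster = make_toy_cluster(n_controls, seed)
    population = [[rng.uniform(-2, 2) for _ in range(n_controls)]
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=lambda c: fitness(cluster, c, target), reverse=True)
        history.append(fitness(cluster, population[0], target))
        parents = population[: pop_size // 4]  # elites survive unchanged
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            # Uniform crossover followed by a small Gaussian mutation.
            children.append([rng.choice(pair) + rng.gauss(0, 0.2)
                             for pair in zip(a, b)])
        population = parents + children
    best = max(population, key=lambda c: fitness(cluster, c, target))
    return best, fitness(cluster, best, target), history
```

For example, `evolve(GATES["XOR"])` returns the best control values found, their score out of 4, and the best score per generation. Because the elite configurations are carried over unchanged, the best score never decreases from one generation to the next.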

For functionality such as image recognition, we design a large-scale small-world architecture that interconnects several nanomaterial clusters by electronic switches whose configuration is to be evolved by a computer server. By modelling the nanomaterials system as a single-electron tunnelling (SET) network, we find in simulations that the system is evolvable to realize inhibition, an important neural network property. For simulations of large-scale SET networks, we develop a novel mean-field approximation and compare its accuracy with a standard Monte Carlo method. Our mean-field method is typically 10-100 times faster for simulating the current-voltage relation of disordered SET networks.

In our quest to realize intelligence, it is useful to have a mathematical definition and a practical measure. To that end, we model intelligence as the capacity to relate patterns to data. We map input-output relations of a physical system to a table of datum-pattern relations, from which we calculate an IQ metric.

Reflecting upon recent trends in computing, we witness a designless software revolution with the rising applications of artificial neural networks. So, it is only natural that a designless hardware revolution follows, and hopefully gives birth to an ENTHIRAN (evolved network that has intelligence realized at nanoscale).