Sometimes you need to get human knowledge and skills to places that are hazardous or hard for people to reach. The project Predictive Avatar Control and Feedback (PACOF) is creating a robotic system that lets the robot operator experience a location just as the robot does. Three researchers, each representing one of the three disciplines of the University of Twente’s EEMCS faculty, are working together on this project.

Avatars

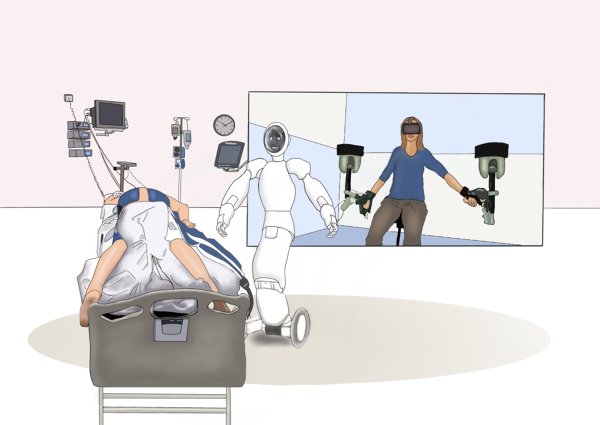

The ‘avatars’ being built by Dr Douwe Dresscher, Dr Felix Schwenninger and Dr Gwenn Englebienne are a bit like the blue humanoids in the film of the same name. “As in the film, we are working on a control system that will let the operator feel as if he or she has taken the robot’s place,” says Dresscher.

Robots are already being developed for home care in Norway, but they are not yet as personal as they could be. In this sparsely populated country, avatars could help bridge the enormous distances by letting carers see and interact with their patients through the robots. The avatars could also be used for hazardous work, such as loading and unloading oil tankers in the Port of Rotterdam. “Hazardous substances must be handled by operators with the right expertise. Using an avatar, you can get the necessary skills to the oil tanker without endangering people,” says Dresscher.

How it works

Three things have to happen for this to work. First, the operators must be completely isolated from the outside world. Second, they must receive realistic stimuli that create a virtual world around them. “This means more than just images and sound projected through VR glasses; it also includes things like odour, temperature and the counterpressure you feel when you push against an object,” says Dresscher. “We want the operator to feel that he or she is somewhere else; the robot must feel like his or her own body.” Finally, the controls must be intuitive, and the avatar must replicate the operator’s movements almost exactly.

Delay

In the PACOF project, the researchers will be focussing on the last of these challenges. “We can give the operator robotic arms to control, but because of delays in the network, there is a lag between the operator making a movement and the robot replicating it,” says Dresscher. Likewise, the feedback from the robot takes a while to reach the operator. This makes such a system more awkward to control and limits what it can be used for.
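To make the effect of network delay concrete, here is a minimal Python sketch. It assumes a hypothetical fixed one-way delay measured in control cycles and one-dimensional positions; `replicate_with_delay` is an illustrative name, not part of the PACOF system. Each operator command waits in a simulated network queue and only reaches the robot `delay_steps` cycles later, so the robot always trails the operator.

```python
from collections import deque


def replicate_with_delay(operator_positions, delay_steps):
    """Robot positions when every operator command arrives `delay_steps`
    control cycles late (hypothetical fixed one-way network delay)."""
    # Pre-fill the simulated network with the start pose, so the robot
    # holds still until the first real command gets through.
    channel = deque([operator_positions[0]] * delay_steps)
    robot_positions = []
    for command in operator_positions:
        channel.append(command)                    # operator sends a command
        robot_positions.append(channel.popleft())  # oldest command reaches the robot
    return robot_positions


if __name__ == "__main__":
    operator = [0, 1, 2, 3, 4]
    robot = replicate_with_delay(operator, delay_steps=2)
    print(robot)  # [0, 0, 0, 1, 2]
    print([o - r for o, r in zip(operator, robot)])  # tracking error: [0, 1, 2, 2, 2]
```

The robot starts moving two cycles late and then stays two steps behind. If the feedback travels back with the same delay, the operator only sees the result of a movement after twice that time.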

Predicting the future

The delay could be overcome by having the robot predict what the operator will do, so that it can execute a movement even before it receives the operator’s signal. “At the same time, we will also try to predict how the robot’s environment will respond and integrate this into the feedback to the operator, so they do not notice the delay. This also means we have to consider what happens if the robot makes a wrong prediction: how can the robot resolve the mistake, and what kind of feedback does the operator need to receive?” says Dresscher.
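The prediction idea can be illustrated with the simplest possible predictor. The sketch below is an assumption for illustration, not the researchers’ method: it takes the most recent operator command that has reached the robot and extrapolates it forward by the known delay, assuming the operator keeps moving at constant velocity. The names `predict_current_command` and `predictive_replication` are hypothetical.

```python
def predict_current_command(received, delay_steps):
    """Estimate the operator's current command from delayed samples.

    `received` holds the commands that have reached the robot so far,
    oldest first. Hypothetical assumption: the operator keeps moving at
    the velocity seen between the two most recent samples.
    """
    if len(received) < 2:
        # Not enough history to estimate velocity: hold the last known command.
        return received[-1]
    velocity = received[-1] - received[-2]  # change per control cycle
    return received[-1] + velocity * delay_steps


def predictive_replication(operator_positions, delay_steps):
    """Robot positions when each cycle's command is predicted, not waited for."""
    robot_positions = []
    for t in range(len(operator_positions)):
        # Commands issued up to cycle t - delay_steps have arrived by cycle t
        # (the first command is assumed known at start-up).
        arrived = operator_positions[: max(t - delay_steps, 0) + 1]
        robot_positions.append(predict_current_command(arrived, delay_steps))
    return robot_positions


if __name__ == "__main__":
    operator = [0, 1, 2, 3, 4, 5, 4, 3]  # moves forward, then reverses
    print(predictive_replication(operator, delay_steps=2))
    # [0, 0, 0, 3, 4, 5, 6, 7]
```

With a two-cycle delay, this predictor tracks the steady motion exactly (cycles 3 to 5) but overshoots when the operator reverses direction (cycles 6 and 7). That overshoot is the kind of wrong prediction Dresscher describes, which the robot would then need to resolve.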

Disciplines working together

This four-year project is being funded by the EEMCS faculty, and three doctoral candidates will be appointed to it. It is one of the faculty’s so-called ‘theme call’ projects, which actively bring together disciplines within the faculty, such as mathematics, computer science and sensor networks. Dresscher is working on the connection between the operator and the avatar, Schwenninger is focussing on the mathematical models and Englebienne is studying how to predict the operator’s behaviour.

“This is a unique opportunity to work more closely with colleagues from my own faculty,” says Dresscher. “You usually work on project proposals with colleagues from outside your own faculty or university. I had already met Felix through teaching, but I did not know Gwenn yet. We are learning a lot from each other and already form a good team. The faculty board gives us the freedom and resources to bring out the best in ourselves.”
