Indoor 3D reconstruction of buildings from point clouds

Due to the COVID-19 crisis measures, the PhD defence of Shayan Nikoohemat will take place (partly) online, in the presence of an invited audience.

The PhD defence can be followed by a live stream.

Shayan Nikoohemat is a PhD student in the Department of Earth Observation Science (EOS). His supervisor is prof.dr.ir. M.G. Vosselman from the Faculty of Geo-Information Science and Earth Observation (ITC).

The development of 3D acquisition systems for indoor environments has accelerated in recent years. In particular, the emergence of mobile laser scanners (MLS) and low-cost sensors that scan the interiors of large buildings and deliver 3D scans (point clouds and RGBD images) enables architects, engineers, and managers to obtain affordable digital twins of buildings in a short time. However, these improvements come at the cost of handling large amounts of data in the form of point clouds and images. Users in the architecture, engineering, and construction (AEC) domain prefer a compact, lightweight digital representation of a building over a large collection of point clouds. Thus, the problem of designing (semi-)automatic methods for converting 3D scans into semantically rich 3D models has arisen in recent years. In the literature, this problem is referred to as scan-to-BIM (Building Information Model) and is discussed in terms of as-is vs. as-built models. This manuscript, however, tries to go beyond providing just a BIM model: it also studies best practices for keeping such 3D models up to date, monitors changes during the building's lifetime, and investigates the compliance of the output with standards and applications.

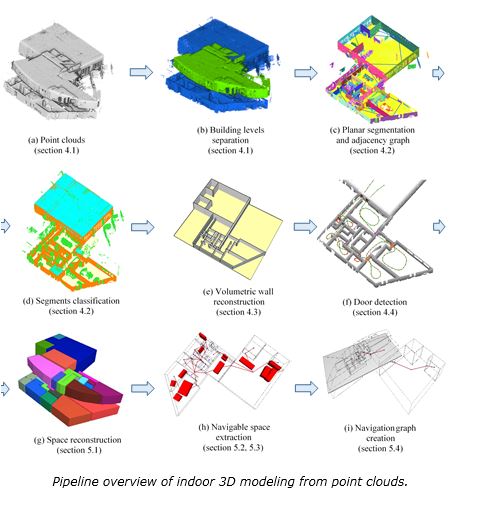

This thesis has three main parts. The first part explains the motivation of this PhD work, reviews current data acquisition devices, 3D indoor standards, and the modeling methods in related work, and summarizes the open challenges. In the second part (chapters 3 and 4), the main pipeline for indoor 3D reconstruction from point clouds is developed and discussed. The last part, comprising chapters 5 and 6, investigates the considerations that need to be taken into account after a 3D model has been created from scans. These include consistency control and the compliance of 3D models with indoor standards (IndoorGML and IFC). This last part also describes how to monitor changes in buildings without rescanning the whole complex after each renovation, and how to determine the type of change (temporary or structural).

The goal of this research is not only to create 3D models from point clouds but also to advance the state of the art and tackle the shortcomings of previous research. In this regard, the main concerns of our work were open challenges such as incomplete data caused by cluttered environments, fictitious data caused by reflective surfaces, the modeling of non-Manhattan World structures, and avoiding the assumptions of vertical walls and horizontal ceilings. Four objectives are proposed to address these open problems: semantic labeling, geometric modeling, watertight 3D model reconstruction, and consistency control of 3D models. The first objective contributes to the problem of classifying indoor point clouds. The proposed solutions aim to distinguish the permanent structures, comprising the three classes of walls, floors, and ceilings, from the clutter (noise and furniture). Several heuristic methods are developed, supported by an adjacency graph, that exploit the topology of man-made structures. The solutions show that the trajectory of the mobile laser scanner is beneficial for understanding indoor scenes. Semantic labeling reaches an average accuracy of 95% for permanent structures, tested in six different use cases with complex architecture and large amounts of glass surfaces and clutter.
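To give a rough idea of how an adjacency graph can support the labeling of permanent structures, the following minimal Python sketch labels planar segments using only their neighbourhood relations. It is an illustration under strong assumptions and not the method of the thesis: the `Segment` class, the bounding-box adjacency test, the tolerance and the normal threshold are all hypothetical, and the scanner trajectory is not used. Floor and ceiling candidates are picked with a deliberately loose normal threshold, and a wall is any segment that touches both, so walls are not required to be vertical.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Segment:
    """Hypothetical planar patch: unit normal plus axis-aligned bounding box (metres)."""
    name: str
    normal: tuple
    bbox_min: tuple
    bbox_max: tuple


def boxes_touch(a, b, tol=0.1):
    """True when the bounding boxes overlap or lie within `tol` on every axis."""
    return all(
        a.bbox_min[i] - tol <= b.bbox_max[i] and b.bbox_min[i] - tol <= a.bbox_max[i]
        for i in range(3)
    )


def build_adjacency(segments, tol=0.1):
    """Undirected adjacency graph: segment name -> names of touching segments."""
    graph = {s.name: set() for s in segments}
    for a, b in combinations(segments, 2):
        if boxes_touch(a, b, tol):
            graph[a.name].add(b.name)
            graph[b.name].add(a.name)
    return graph


def label_segments(segments, tol=0.1, min_abs_nz=0.5):
    """Label each segment as floor, ceiling, wall or clutter (toy heuristic)."""
    graph = build_adjacency(segments, tol)
    labels = {}

    # Assumption: the lowest and highest roughly horizontal segments are the
    # floor and the ceiling; the loose threshold tolerates sloped surfaces.
    flat = [s for s in segments if abs(s.normal[2]) >= min_abs_nz]
    if flat:
        floor = min(flat, key=lambda s: s.bbox_min[2])
        ceiling = max(flat, key=lambda s: s.bbox_max[2])
        labels[floor.name] = "floor"
        if ceiling.name != floor.name:
            labels[ceiling.name] = "ceiling"

    # Snapshot of the floor/ceiling labels, so the result does not depend on
    # the order in which the remaining segments are visited.
    anchors = dict(labels)
    for s in segments:
        if s.name in anchors:
            continue
        neighbour_labels = {anchors.get(n) for n in graph[s.name]}
        # A segment connected to both floor and ceiling is treated as a wall.
        if {"floor", "ceiling"} <= neighbour_labels:
            labels[s.name] = "wall"
        else:
            labels[s.name] = "clutter"
    return labels
```

In this sketch a tabletop or the side of a cabinet ends up as clutter because it is not connected to the ceiling, while a wall segment that reaches from floor to ceiling is kept as a permanent structure. Real scans would need occlusion handling and more robust adjacency tests than touching bounding boxes.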

Moreover, for disaster management applications, methods are developed for modeling stairs in multistory buildings, modeling furniture as obstacles, and adding doors. These are supported by a fine-grained space subdivision based on the enclosure of space, i.e. connected walls, floors, and ceilings form a closed space. Spaces are further divided into subspaces by including the furniture in the process. Finally, we demonstrate the robustness of our algorithms on four complex multistory buildings. The contributions of this part are modeling interiors with and without furniture for advanced navigation networks, and modeling both volumetric walls (complying with BIM models) and volumetric spaces (complying with IndoorGML models). By comparing our models with handcrafted BIM models, we show that our pipeline reaches an accuracy of 90% in modeling rooms and doors, including the detection of some closed doors. Unlike related works that use 3D models only for BIM or only for navigation purposes, our results demonstrate real-world examples from point clouds (no synthetic models) for both applications.
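As a small illustration of what a navigation network over reconstructed spaces can look like, the Python sketch below treats rooms as nodes and doors as edges, in the spirit of the space-connectivity graphs used by IndoorGML, and finds a route with breadth-first search. The room names, the `build_navigation_graph` and `shortest_route` helpers and the door list are hypothetical examples, not output of the thesis pipeline.

```python
from collections import deque


def build_navigation_graph(doors):
    """Build an undirected room graph from (room_a, room_b) door pairs."""
    graph = {}
    for room_a, room_b in doors:
        graph.setdefault(room_a, set()).add(room_b)
        graph.setdefault(room_b, set()).add(room_a)
    return graph


def shortest_route(graph, start, goal):
    """Return the route with the fewest door crossings, or None if unreachable."""
    if start not in graph or goal not in graph:
        return None
    previous = {start: None}
    queue = deque([start])
    while queue:
        room = queue.popleft()
        if room == goal:
            # Walk back through the predecessors to recover the route.
            route = []
            while room is not None:
                route.append(room)
                room = previous[room]
            return route[::-1]
        for neighbour in sorted(graph[room]):
            if neighbour not in previous:
                previous[neighbour] = room
                queue.append(neighbour)
    return None


# Hypothetical floor plan: each pair is a door connecting two spaces.
doors = [
    ("office", "corridor"),
    ("storage", "corridor"),
    ("corridor", "stairs"),
    ("stairs", "lobby"),
]
graph = build_navigation_graph(doors)
route = shortest_route(graph, "office", "lobby")
```

Subdividing a room into subspaces around furniture would simply add more nodes and edges to such a graph, which is one reason a model with and without furniture is useful for navigation.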

In conclusion, the methods developed in this research show that there is great potential in automating scan-to-BIM and creating as-is models, even for complex architecture. Future work should be dedicated to adding levels of detail, such as the type of furniture and the function of rooms. Another line of research is applying deep learning methods for early-stage classification of the point clouds before the modeling step. Moreover, stitching indoor 3D models to the exterior models of buildings would provide a seamless reconstruction of large-scale 3D city models.