SHAPING THE FUTURE OF OUR DIGITAL SOCIETY

We live and work in the exciting age of digital transformation. The University of Twente’s mission as a people-first university of technology places us in the crossfire of digital advancement and the disruption it can cause. As scientists and tech pioneers, our task is to drive digitalisation in close cooperation with all our stakeholders. Alongside other institutes and faculties at the University of Twente, these include business and industry, government, NGOs and knowledge institutes. As a partner in regional, national and international ecosystems, we offer the knowledge, education and infrastructure for the development of successful solutions and products. In doing so, we focus on five themes: Data Science & AI, Smart Industry, eHealth, Robotics and Cybersecurity. We boost innovation by delivering scientific knowledge for real-life solutions that have societal and economic relevance. Our research focuses on natural, societal and industrial challenges, which serve as starting points for our way of working and have one common denominator: digital technologies. Our work contributes to solving three main challenges.

Our themes

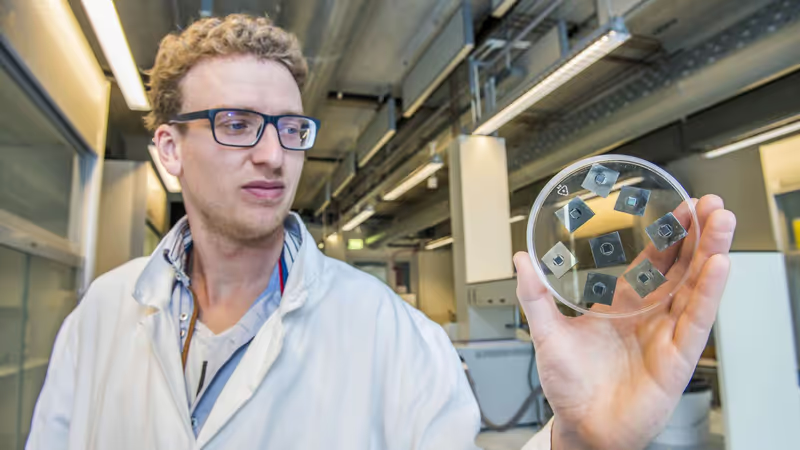

Smart innovations in manufacturing are key to securing the welfare and wellbeing of society. Smart Industry is the way forward for industry. It means personalized, smart products and optimized human-machine interaction, yielding faster, cheaper, and more sustainable production.

Digitalisation of society provides a treasure trove of data, generated by an abundance of sensors and by the endless possibilities for people to connect via websites and social media. Data science and artificial intelligence offer many possibilities to interpret this data properly, learn from it and use it to achieve specific goals and tasks.

The Twente University Centre for Cybersecurity Research (TUCCR) is a public-private partnership where experts, professionals, entrepreneurs, researchers, and students from industry and knowledge partners collaborate to deliver talent, innovations, and know-how in the domain of cybersecurity. The mission of TUCCR is to strengthen the security and digital sovereignty of our society by performing top-level research on real-world data and network security challenges.

Robotics is transforming many industries. Robotics technology clearly has a huge impact on a broad range of end-user markets and applications, and that impact will continue to grow. In the years to come, robotics will start to influence our daily lives in many ways, across healthcare, agriculture, the civil, commercial and consumer sectors, logistics and transport.

How we can help you

Our partners and stakeholders approach us with all kinds of questions. These include requests to develop a vision of current and future developments in digitalisation in specific fields. Companies also approach us to future-proof their strategies, products and solutions. DSI acts as an innovation hub by connecting the knowledge and expertise within the University of Twente to the questions of our partners and stakeholders.