Students, society, and the labor market need to be able to trust the value of a diploma. Safeguarding that value is a shared responsibility of the programme management and the examination board.

The Student Charter governs the rights of students and the way we treat each other at the UT. It consists of an institutional section, which applies to all students irrespective of their programme, and a programme-specific section, as described in the EER for the programme. The Student Charter also describes what is considered academic misconduct and fraud.

The Examination Board (ExBo) of each programme specifies in its Rules & Regulations (R&R; also called Rules & Guidelines) what it considers to be fraud, which may include additional provisions. The R&R also set out what action will be taken in cases of (suspected) academic misconduct. Any suspicion of fraud must be reported to the ExBo, which will then investigate and determine whether fraud occurred and what measures need to be taken.

It is important that students are well informed about what is regarded as fraud (including plagiarism and free riding) and what the consequences may be. As an example, the Faculty of BMS has set up a website on scientific misconduct to inform students and a separate site to inform examiners.

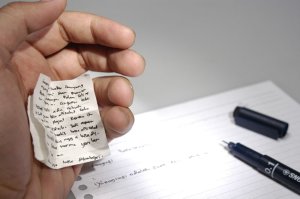

Fraud may occur during written tests. The Assembly of Examination Board Chairs has developed Rules of Order for written tests. This document describes the rules and procedures to be followed for written tests (including those taken digitally). It applies to tests in study programmes whose Examination Board has adopted these rules as part of its Rules & Guidelines.

To make sure students are aware of what behaviour is expected and allowed during a test, a cover sheet is recommended. An example of a cover sheet as used by BMS can be found [here].

For teachers, BMS also has a more elaborate Rules during Exams Guide: rules-during-exams-guide-for-examiners-2025-2026.pdf

Fraud case collection

This document contains a collection of concrete (anonymised) fraud cases. This collection is meant to increase transparency for:

- Students, to get a better understanding of what is considered to be fraud and what the consequences may be

- Examination Boards, to share insight into the practice across study programmes and enable a more uniform treatment of fraud cases

Disclaimer: This overview should be used only for its intended purpose. In particular, no prediction may be derived from it regarding individual (new) fraud cases: every case is different and will be judged on its own characteristics. The final decision always rests with the Examination Board of the study programme in question.

Interesting resources

What can be done to secure the testing process? What can be done to prevent or detect fraud? Here are some resources (NB: not all are available in English):

- Werkboek Veilig Toetsen, SURF (Dutch only). To support institutions in making the assessment process secure, SURF, together with experts from various higher education institutions, has developed the workbook Veilig Toetsen. The workbook contains tools for setting up the entire assessment process securely and applies to both the paper and the digital process.

- The EUR has produced a useful publication in English on fraud and plagiarism for students: 27434-16-Erasmus-ins.qxd:27434-16-Erasmus-ins. They also made a short video in English: "Fraud or plagiarism. It doesn’t make you any better!"

- The University of California, Berkeley has a very informative site about fraud: Academic Misconduct: Cheating, Plagiarism, and Other Forms.

- This site is heavily sponsored, but provides good insight into the reasons for plagiarism, along with tips on how to prevent or detect it: https://virtualsalt.com/antiplag.htm

- Aimed more at scientific personnel, but also interesting: Netherlands Code of Conduct for Research Integrity | NWO, or in Dutch: Nederlandse gedragscode wetenschappelijke integriteit | NWO

- (Dutch) Book: Tussen fout en fraude. Integriteit en oneerlijk gedrag in het wetenschappelijk onderzoek. Kees Schuyt (2014). Leiden University Press.